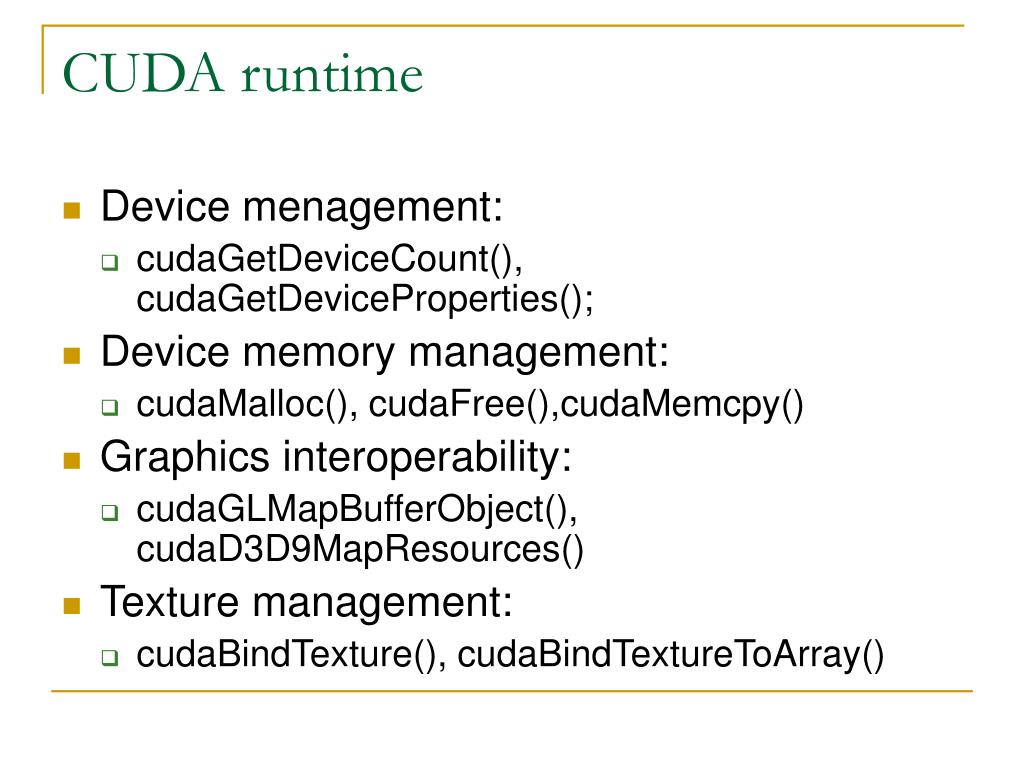

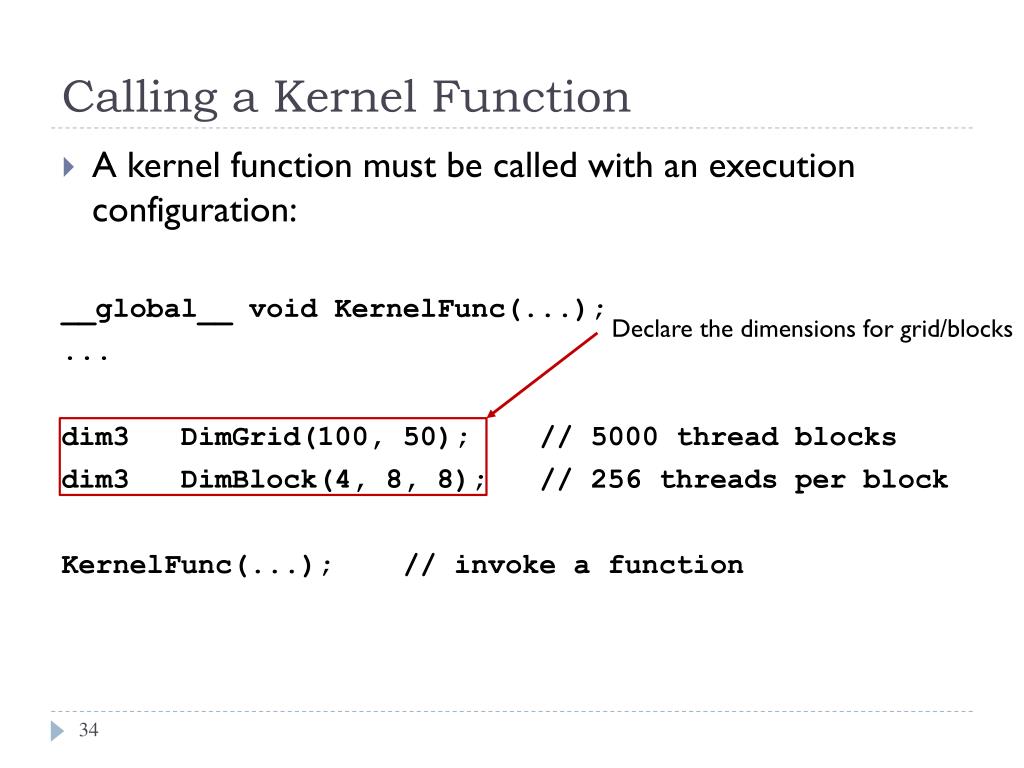

The Conv3D constructor is declared as: public Conv3D(int filters, Tuple kernel_size, Tuple strides = null, string padding = "valid", string data_format = "channels_last", Tuple dilation_rate = null, string activation = "", bool use_bias = true, string kernel_initializer = "glorot_uniform", string bias_initializer = "zeros", string kernel_regularizer = "", string bias_regularizer = "", string activity_regularizer = "", string kernel_constraint = "", string bias_constraint = "", Shape input_shape = null)

Its first three parameters are as follows. filters is an integer, the dimensionality of the output space (i.e. the number of output filters in the convolution). kernel_size is an integer or tuple/list of 3 integers, specifying the depth, height and width of the 3D convolution window. strides is an integer or tuple/list of 3 integers, specifying the strides of the convolution along each spatial dimension; it can be a single integer to specify the same value for all spatial dimensions.

What does dim3 stand for in clinical nutrition? dim3 brings together a group of seasoned healthcare professionals and highly skilled engineers to revolutionize the way clinical nutrition is managed from the hospital to home. Malnutrition affects 60% of nursing home patients and 40% of clinical patients.

In CUDA, the only facts to know about dim3 are these: dim3 is a simple structure that is defined in %CUDA_INC_PATH%/vector_types.h, and it can also be used in any user code for holding values of 3 dimensions.
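For the Conv3D parameters described above, it can help to see how kernel_size and strides determine the output size along one spatial axis. The sketch below assumes padding = "valid" and a dilation rate of 1; the function name valid_output_length is hypothetical and is not part of any library.

```cpp
#include <cassert>

// Hypothetical helper (not a library function).
// Output length along one spatial axis for padding = "valid" and dilation 1:
// floor((input - kernel) / stride) + 1.
int valid_output_length(int input, int kernel, int stride) {
    assert(kernel > 0 && stride > 0 && input >= kernel);
    return (input - kernel) / stride + 1;
}
```

Applying it once per axis (depth, height, width) gives the three spatial dimensions of the output under those assumptions.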

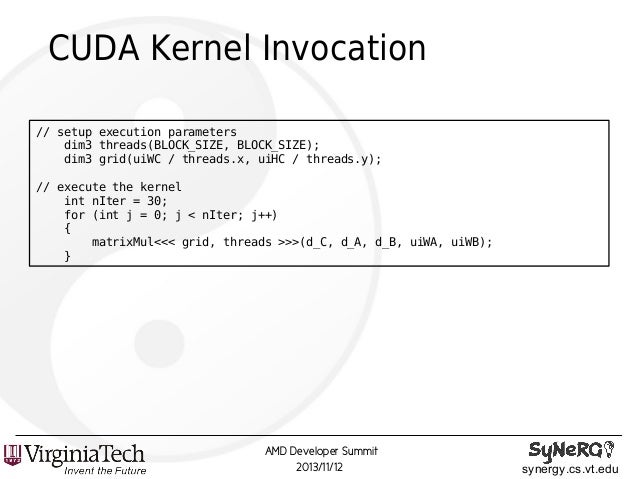

dim3 is an integer vector type based on uint3 that is used to specify dimensions. When defining a variable of type dim3, any component left unspecified is initialized to 1. The access pattern, however, depends on how you interpret your data and on whether you access it with 1D, 2D or 3D blocks of threads.
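The default-to-1 rule can be illustrated without the CUDA toolkit. The sketch below uses a hypothetical stand-in struct, Dim3Sketch, that only mimics how unspecified components become 1; it is not the real dim3 from vector_types.h, and the helper volume is likewise an assumption made for illustration.

```cpp
// Hypothetical stand-in for CUDA's dim3, written so the example compiles
// with a plain C++ compiler. It only mirrors the default-to-1 behavior.
struct Dim3Sketch {
    unsigned int x, y, z;
    // Any component that is not supplied defaults to 1.
    constexpr Dim3Sketch(unsigned int vx = 1, unsigned int vy = 1, unsigned int vz = 1)
        : x(vx), y(vy), z(vz) {}
};

// Number of elements spanned by the three dimensions (hypothetical helper).
constexpr unsigned long long volume(const Dim3Sketch& d) {
    return static_cast<unsigned long long>(d.x) * d.y * d.z;
}
```

Under this sketch, Dim3Sketch(16, 16) describes a 16 x 16 x 1 shape, which is why a 2D block of threads can be declared by giving only two components.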

When to use dim3 in block / grid dimensions? dim3 is an integer vector type that can be used in CUDA code. Its most common application is to pass the grid and block dimensions in a kernel invocation. In C code, dim3 can be initialized with an assignment of the form dim3 grid = …

Around the kernel launch, the host program declares and allocates host and device memory, transfers data from the host to the device, and transfers results from the device back to the host.

What is a block in CUDA? CUDA kernels are subdivided into blocks. A group of threads is called a CUDA block. A kernel is executed as a grid of blocks of threads (Figure 2). Each kernel is executed on one device, and CUDA supports running multiple kernels on a device at one time.

Shared memory is a powerful feature for writing well-optimized CUDA code. Access to shared memory is much faster than global memory access because it is located on chip. Because shared memory is shared by threads in a thread block, it provides a mechanism for threads to cooperate.

The CUDA Occupancy Calculator allows you to compute the multiprocessor occupancy of a GPU by a given CUDA kernel. The multiprocessor occupancy is the ratio of active warps to the maximum number of warps supported on a multiprocessor of the GPU.

cudaDeviceSynchronize() forces the program to wait until the kernels and memcpys in the stream(s) are complete before continuing, which can make it easier to find out where illegal accesses are occurring (since the failure will show up during the sync).
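Two small calculations follow from the points above: how many blocks a grid needs to cover a given number of elements, and the occupancy ratio as defined in the text. The sketch below is host-only C++ with hypothetical function names (blocks_for, occupancy). The warp counts passed to occupancy are assumed inputs; on real hardware they depend on the device and on the kernel's resource usage.

```cpp
#include <cassert>

// Number of blocks needed to cover n elements with a given block size.
// Ceiling division, so a partially filled last block is still counted.
unsigned int blocks_for(unsigned int n, unsigned int threads_per_block) {
    assert(threads_per_block > 0);
    return n / threads_per_block + (n % threads_per_block != 0 ? 1u : 0u);
}

// Occupancy as defined above: active warps divided by the maximum number
// of warps supported on a multiprocessor.
double occupancy(unsigned int active_warps, unsigned int max_warps) {
    assert(max_warps > 0 && active_warps <= max_warps);
    return static_cast<double>(active_warps) / max_warps;
}
```

A value such as blocks_for(n, 256) is the kind of number that would typically be stored in the x component of the grid dimension, with the block dimension holding the 256 threads; because the last block may extend past n, kernels launched this way usually guard against out-of-range indices.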
